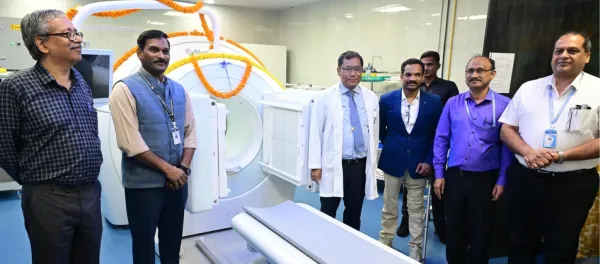

How AI is Quietly Transforming Radiology in Hospitals

Federated learning is an early-stage approach that hospitals are exploring to build AI that works reliably across different patient populations. It lets AI models train across multiple hospital sites without patient data ever leaving each institution.
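To make the idea concrete, here is a minimal sketch of one common federated scheme, federated averaging, written in plain Python. Everything in it is an illustrative assumption rather than any hospital's or vendor's actual system: the three "sites" hold synthetic numbers instead of scans, the model is a one-variable linear fit, and the names `local_update`, `federated_average`, and `make_site_data` are hypothetical. The point it shows is that each site sends back only model weights and a sample count, while the records themselves stay where they are.

```python
import random


def local_update(weights, data, lr=0.1, epochs=5):
    """Run a few gradient-descent steps on one site's private data.

    Only the updated weights and the sample count are returned;
    the records in `data` never leave this function.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum((w * x + b - y) * x for x, y in data) / n
        grad_b = sum((w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b), n


def federated_average(updates):
    """Combine site models, weighting each by its number of local samples."""
    total = sum(n for _, n in updates)
    w = sum(site_w[0] * n for site_w, n in updates) / total
    b = sum(site_w[1] * n for site_w, n in updates) / total
    return (w, b)


def make_site_data(n, x_low, x_high, seed):
    """Synthetic stand-in for one hospital's data (assumed: y = 2x + 1 plus noise)."""
    rng = random.Random(seed)
    xs = [rng.uniform(x_low, x_high) for _ in range(n)]
    return [(x, 2.0 * x + 1.0 + rng.gauss(0, 0.05)) for x in xs]


# Three hypothetical sites with different sizes and different input ranges,
# standing in for hospitals that serve different patient populations.
sites = [
    make_site_data(40, 0.0, 1.0, seed=1),
    make_site_data(120, 0.5, 1.5, seed=2),
    make_site_data(80, -1.0, 0.5, seed=3),
]

global_model = (0.0, 0.0)
for _ in range(200):
    # Each site trains locally, starting from the current shared model.
    updates = [local_update(global_model, data) for data in sites]
    # The coordinator sees only weights and counts, never the raw data.
    global_model = federated_average(updates)

print("shared model (slope, intercept):", global_model)
```

In this toy setup the shared model should settle close to the slope and intercept used to generate the data, even though no site ever sees another site's records. Real deployments add further safeguards, such as secure aggregation of the updates, that this sketch leaves out.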

Every day, radiologists review hundreds of medical images, including CT scans, MRIs, and X-rays, looking for signs of disease. It is detailed, demanding work, and the volume keeps growing. Artificial intelligence has driven one of the most significant shifts in hospital-based radiology over the past decade. What began in the 1990s as basic computer-aided detection, with tools that flagged suspicious areas on mammograms with limited reliability, has evolved into sophisticated systems capable of analyzing thousands of images in seconds and identifying findings that the human eye might overlook after hours of reviewing scans.
